Companies pursuing digital transformation increasingly need to rebuild their business applications quickly in response to changes in the business environment, and container technology is attracting interest as an execution platform for these applications. This article presents a scalable data store that rapidly provides containers with persistent volumes. It also presents the development concept for a next-generation data platform for Hitachi digital infrastructure, which refines this data store in response to the rise of software-defined IT infrastructure. The platform is intended to enable optimum operation of business applications and to store their data securely, and it should help Hitachi’s Lumada business grow in the global market.

IT companies increasingly need to harness digital technologies and digitalized information to reform their business and operations and create value that was previously unattainable. Because these companies are searching for ways to innovate through trial and error, they need to create low-cost prototypes rapidly. At the same time, analyzing these prototypes often involves information that underpins a company’s competitiveness, so many companies are reluctant to let their working systems send data outside the organization. An essential requirement for achieving the desired business innovation is therefore a platform that enables rapid, on-premises development of applications and supports many trial-and-error cycles over a short period.

To enable fast trial-and-error cycles, the developed applications need to be deployable in increments as small as possible and in short cycles. The platform also needs to support container technology*1 and container orchestration tools*2.

Hitachi enables these types of systems to be deployed rapidly and efficiently by providing common functions in the form of Lumada’s Digital Innovation Platform. This platform uses the open source software (OSS) Docker*3 as its container technology and Kubernetes*4 (also OSS) as its container orchestration tool. It therefore needed a data store that could hold the data used by the applications and middleware running in these containers in a highly reliable and scalable way.

The first part of this article describes the method Hitachi used to implement a highly reliable and scalable data store in Lumada. The second part presents Hitachi’s product development concept for the future.

Lumada brings together a catalog of solutions for digital transformation (DX) in the Lumada Solution Hub (LSH). When a user selects a solution from the catalog, LSH uses container technology to construct an entire infrastructure as a service (IaaS)-based environment so that verification of the solution can start rapidly. Because LSH uses Kubernetes as its container orchestration tool, Hitachi needed to provide storage that works with Kubernetes to supply persistent volumes (PVs) to containers.

The LSH data store for containers needed to satisfy the following four requirements:

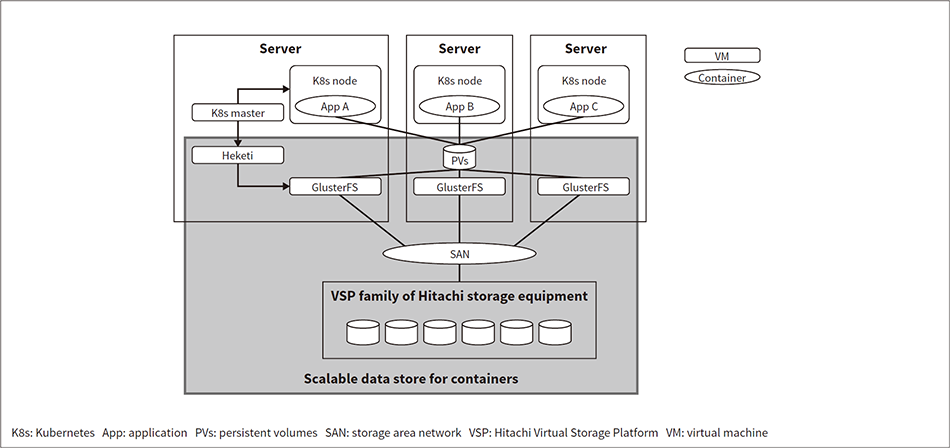

Fig. 1—Scalable Data Store for Containers

GlusterFS and Heketi were used to create a scalable data store that works with a Kubernetes (K8s) master to dynamically provide PVs to containers. To ensure high reliability, the data itself was placed in VSP (Hitachi storage equipment).

The OSS-based distributed file system GlusterFS*5 was used to handle Requirements (1) and (2). GlusterFS is widely used together with orchestration systems such as Kubernetes and has an extensive operational track record.

The OSS-based GlusterFS management service Heketi(4) was used to handle Requirement (3). Heketi enables Kubernetes to dynamically generate, modify, or delete GlusterFS volumes, and supply them as PVs to containers.
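The dynamic provisioning path described above can be sketched as a pair of Kubernetes manifests, built here as Python dictionaries. This is only an illustration under stated assumptions: the article does not say which provisioner or settings LSH uses. The sketch assumes the in-tree `kubernetes.io/glusterfs` provisioner, which calls Heketi through its `resturl` parameter and has since been removed from recent Kubernetes releases. The Heketi URL, the object names, the replica count, and the volume size are all hypothetical.

```python
import json


def glusterfs_storage_class(name, heketi_url, replicas=3):
    """Build a StorageClass that asks Heketi to create GlusterFS volumes.

    Assumption: the in-tree kubernetes.io/glusterfs provisioner is available.
    """
    return {
        "apiVersion": "storage.k8s.io/v1",
        "kind": "StorageClass",
        "metadata": {"name": name},
        "provisioner": "kubernetes.io/glusterfs",
        "parameters": {
            "resturl": heketi_url,                  # Heketi REST endpoint
            "volumetype": f"replicate:{replicas}",  # replicated GlusterFS volume
        },
    }


def volume_claim(name, storage_class, size_gib):
    """Build a PersistentVolumeClaim that a container's pod can mount."""
    return {
        "apiVersion": "v1",
        "kind": "PersistentVolumeClaim",
        "metadata": {"name": name},
        "spec": {
            "storageClassName": storage_class,
            "accessModes": ["ReadWriteMany"],
            "resources": {"requests": {"storage": f"{size_gib}Gi"}},
        },
    }


# Hypothetical names and endpoint, chosen only for this example.
storage_class = glusterfs_storage_class(
    "lsh-gluster", "http://heketi.example.internal:8080"
)
claim = volume_claim("app-data", storage_class["metadata"]["name"], 10)

print(json.dumps([storage_class, claim], indent=2))
```

When a claim like this is submitted, Kubernetes asks the provisioner named in the StorageClass for a volume, and the provisioner in turn asks Heketi to create a GlusterFS volume and bind it to the claim as a PV.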

To handle Requirement (4), the data itself was stored in the Hitachi Virtual Storage Platform (VSP) family of storage equipment, enabling the use of Hitachi’s highly reliable, high-performance data protection.

The scalable data store for containers shown in Figure 1 was developed with these measures incorporated into it. Hitachi began providing it in July 2019.

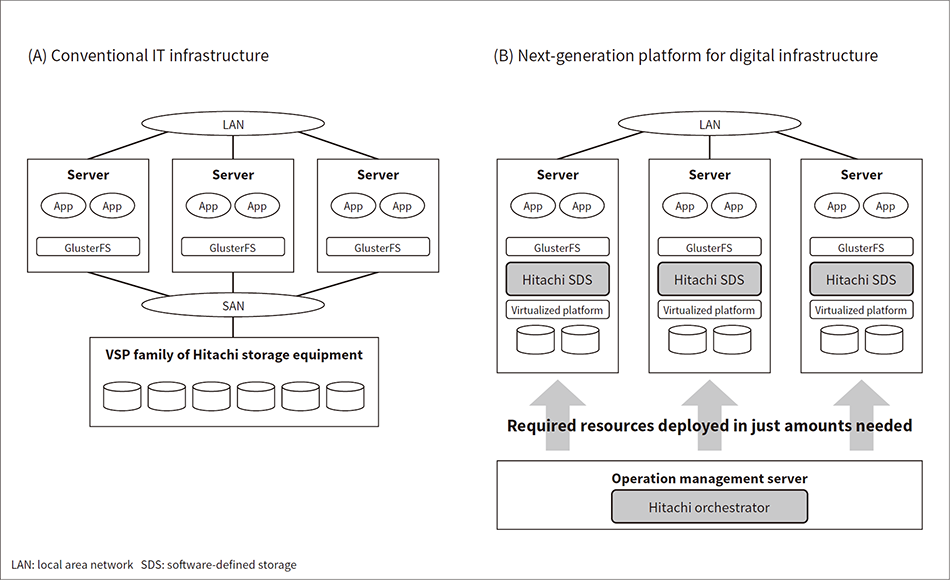

Fig. 2—Next-generation Data Platform for Digital Infrastructure

The resources of conventional IT infrastructure are tied to hardware. Hitachi’s next-generation data platform virtualizes the resources and deploys the required resources to the servers in just the amounts needed, resulting in more rapidly adaptable and flexible IT infrastructure.

To adapt to radical changes in the business environment, companies increasingly need IT infrastructure that can be modified rapidly and flexibly. The use of software-defined IT infrastructure is on the rise as a way to satisfy this need. Software-defined IT infrastructure is a framework that uses virtual versions of the server, network, and storage resources that make up IT infrastructure. It uses software instead of human operators to control the deployment of these resources or to change their configuration. This approach lets companies raise the agility and flexibility of their in-house resource deployment, configuration changes, and IT infrastructure adaptation to levels rivaling a public cloud.

Hitachi is working on developing a next-generation data platform for digital infrastructure that will serve as the future model for a software-defined version of the scalable data store for containers described in the previous section. Figure 2 provides an overview of Hitachi’s next-generation platform. A conventional IT infrastructure configuration is shown on the left side of the diagram. In a conventional configuration, the central processing unit (CPU), memory and storage area resources needed by business applications and distributed file systems are tied to hardware such as servers and storage devices. When additional resources are needed, the administrator needs to decide which and how many of these resources are needed, and manually perform the installation or configuration work. And since various different types of hardware are needed, it takes time and effort to procure each item separately.

Hitachi’s next-generation data platform will virtualize resources by using virtual platforms such as hypervisors*6 or containers. An operation management software application called an orchestrator*7 will then predict the resources needed in the future and deploy the required resources to the servers in just the required amounts. This approach will let companies increase the agility and flexibility of their in-house resource deployment, configuration changes, and IT infrastructure adaptation.
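The predict-then-deploy behavior attributed to the orchestrator can be illustrated with a very small sketch. The article does not describe how the orchestrator forecasts demand, so the moving-average forecast, the 20% headroom, the per-node capacity, and all function names below are assumptions made purely for illustration.

```python
import math


def forecast_next(history, window=3):
    """Predict the next value as the mean of the last `window` observations."""
    recent = history[-window:]
    return sum(recent) / len(recent)


def nodes_required(predicted_users, users_per_node, headroom=0.2):
    """Smallest node count that covers the forecast plus a safety margin."""
    needed = predicted_users * (1 + headroom) / users_per_node
    return max(1, math.ceil(needed))


def plan_change(current_nodes, history, users_per_node):
    """Return how many nodes to add (positive) or release (negative)."""
    target = nodes_required(forecast_next(history), users_per_node)
    return target - current_nodes


# Hypothetical daily user counts for a workload whose demand varies by day.
daily_users = [420, 480, 510, 560, 600]
delta = plan_change(current_nodes=10, history=daily_users, users_per_node=50)

print(f"change node count by {delta:+d}")
```

A production orchestrator would forecast several resource types at once (CPU, memory, and storage capacity) and would apply the resulting plan through the virtualization layer rather than printing it.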

Virtual desktop infrastructure (VDI) is one possible application of Hitachi’s next-generation data platform. Demand for VDI is rising as telecommuting becomes more widespread amid work style reforms and efforts to prevent COVID-19 transmission. The number of employees using VDI changes on a daily basis, but Hitachi’s next-generation data platform will be able to rapidly and flexibly adapt to the changes.

The platform will use software running on general-purpose servers to provide storage functions, an approach known as software-defined storage (SDS). Hitachi has developed its own SDS application (Hitachi software-defined storage) that offers the following benefits:

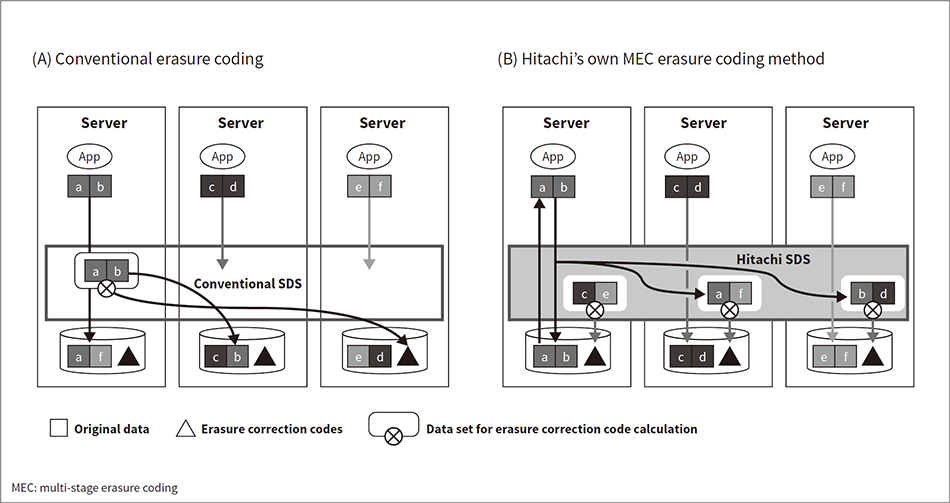

Fig. 3—Hitachi’s Own MEC Erasure Coding Method

Conventional erasure coding methods suffer from lower performance by generating communication between nodes when reading data. MEC stores all the original data on the local drive. It also generates erasure correction codes from the data on the local server and other servers. This approach provides a good balance between performance and error tolerance.

To solve this problem, Hitachi has developed its own erasure coding method called multi-stage erasure coding (MEC)(5). Figure 3 shows the differences between conventional erasure coding and MEC. MEC stores all the original data on the local drive, speeding up reading and writing. It also generates erasure correction codes using local server data and data sent from other servers. These features create a good balance between performance and error tolerance.
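The two ideas in this paragraph, keeping the original data whole on the local drive and building redundancy from data held on several servers, can be illustrated with a deliberately simplified sketch. It is not Hitachi’s MEC: it uses a single XOR parity block that tolerates the loss of one node, whereas MEC is described as a multi-stage scheme. The `Cluster` class, the node names, and the block contents are hypothetical.

```python
from functools import reduce


def xor_blocks(blocks):
    """Bytewise XOR of equally sized blocks."""
    return bytes(reduce(lambda a, b: a ^ b, column) for column in zip(*blocks))


class Cluster:
    """Toy model: every node stores its own data whole; one parity protects all."""

    def __init__(self, node_data):
        # Each node keeps its original data on its local drive.
        self.local = dict(node_data)
        # The parity is generated from the data of every node in the group.
        # In a real system it would be stored on a separate server.
        self.parity = xor_blocks(list(self.local.values()))

    def read(self, node):
        """Local read: nothing is fetched from other nodes."""
        return self.local[node]

    def recover(self, failed):
        """Rebuild a lost block from the parity and the surviving blocks."""
        survivors = [data for name, data in self.local.items() if name != failed]
        return xor_blocks(survivors + [self.parity])


cluster = Cluster({"node-a": b"AAAA", "node-b": b"BBBB", "node-c": b"CCCC"})
original = cluster.read("node-b")
rebuilt = cluster.recover("node-b")

print(rebuilt == original)
```

Because each block stays intact on its own node, a normal read touches only the local drive. Traffic between nodes is needed only when the parity is generated and when a failed node is rebuilt.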

Hitachi SDS comes with MEC along with functions enabling maintenance work and drive upgrades or other configuration changes during operation. These features give Hitachi SDS higher availability and data reading performance while using general-purpose servers as hardware.

Together, these benefits give Hitachi’s next-generation data platform agility, high availability, and high performance, allowing it to provide a software-defined IT infrastructure that is distinctive to Hitachi.

This article has presented a scalable data store for the containers that underpin LSH, along with the development concept for a next-generation data platform for Hitachi digital infrastructure, which refines this data store in response to the rise of software-defined IT infrastructure. Hitachi’s next-generation data platform will enable optimum operation of the applications and middleware used to create solutions on the Digital Innovation Platform. It will also be refined into hyper-converged infrastructure that stores the data of these applications and middleware securely, helping Hitachi grow its Lumada business in the global market.